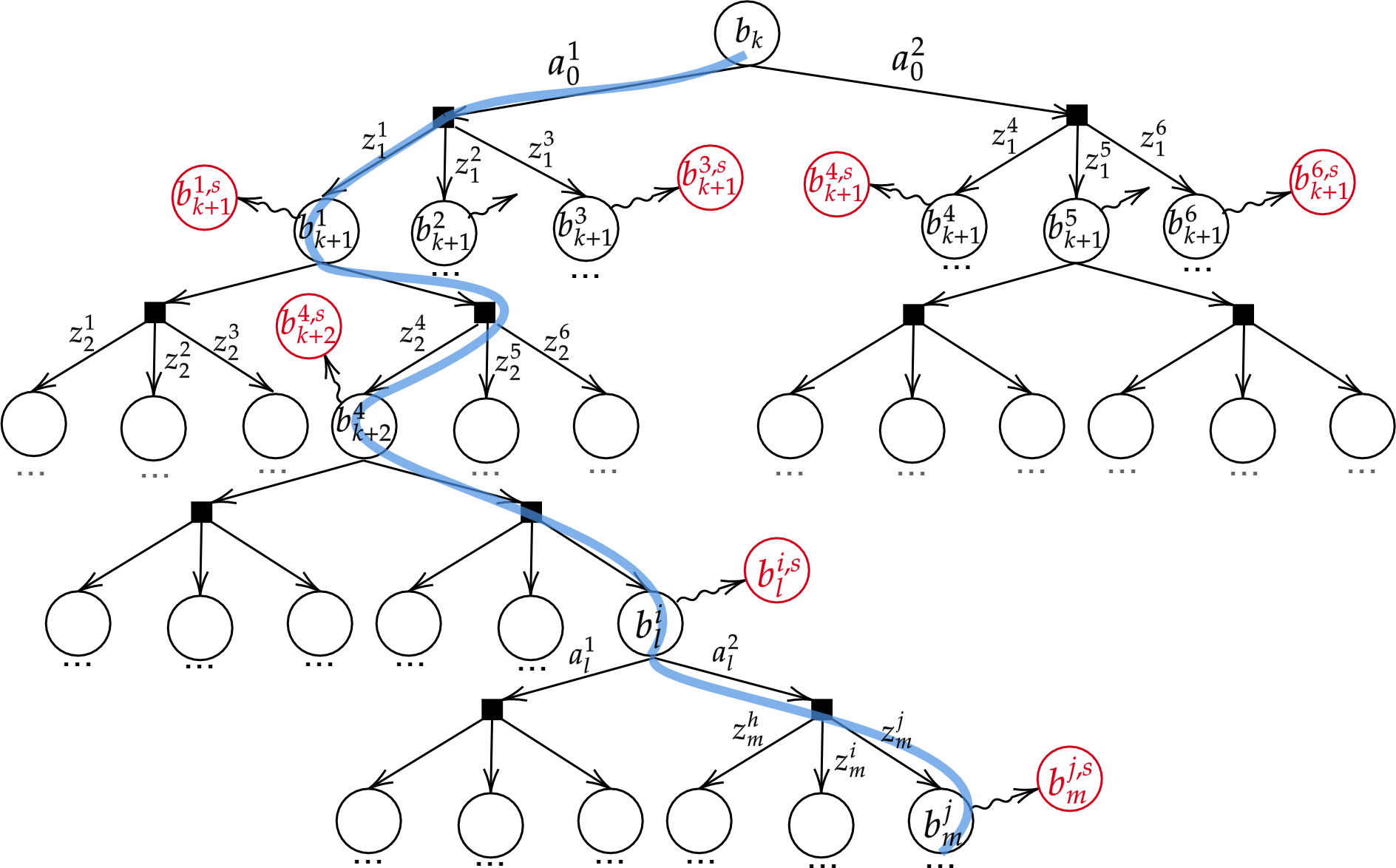

Specifically, one of the works below focuses on belief simplification, namely using only a subset of the state samples, and uses it to formulate bounds on the original belief-dependent rewards. These bounds are then used to prune branches of the belief tree while calculating the optimal policy. We further introduce the notion of adaptive simplification, which re-uses calculations between different simplification levels, and exploit it to prune, at each level of the belief tree, all branches but one. Our approach is therefore guaranteed to find the optimal solution of the original problem, with a substantial speedup. As a second key contribution, we derive novel analytical bounds on differential entropy for a sampling-based belief representation, which we believe are of interest in their own right.
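
The pruning idea can be illustrated with a minimal Python sketch. It is a toy under stated assumptions and not the bounds or algorithm from the publications below: each branch's exact reward is assumed to be the mean of per-sample terms lying in [0, 1], so a partial sum over a subset of samples gives deterministic lower and upper bounds. The names `Branch`, `refine`, `bounds`, and `select_branch` are hypothetical.

```python
import random


class Branch:
    """Toy candidate branch: its exact reward is the mean of per-sample terms in [0, 1]."""

    def __init__(self, name, terms):
        self.name = name
        self.terms = terms        # per-sample reward terms, each assumed to lie in [0, 1]
        self.used = 0             # how many samples the current simplification level uses
        self.partial_sum = 0.0    # running sum over the used samples, kept between levels

    def refine(self, step):
        """Move to a finer level by adding up to `step` samples to the running sum."""
        end = min(self.used + step, len(self.terms))
        self.partial_sum += sum(self.terms[self.used:end])
        self.used = end

    def bounds(self):
        """Bound the exact mean: every unused term contributes between 0 and 1."""
        total = len(self.terms)
        lower = self.partial_sum / total
        upper = (self.partial_sum + (total - self.used)) / total
        return lower, upper

    def is_exact(self):
        return self.used == len(self.terms)


def select_branch(branches, step=50):
    """Prune with bounds, refining adaptively, until a single branch remains."""
    alive = list(branches)
    for b in alive:
        b.refine(step)
    while True:
        best_lower = max(b.bounds()[0] for b in alive)
        # A branch whose upper bound is below the best lower bound cannot be optimal.
        alive = [b for b in alive if b.bounds()[1] >= best_lower]
        if len(alive) == 1:
            return alive[0]
        unresolved = [b for b in alive if not b.is_exact()]
        if not unresolved:
            # All survivors are exact and tied; pick any maximizer.
            return max(alive, key=lambda b: b.bounds()[0])
        for b in unresolved:
            b.refine(step)


# Hypothetical data: three branches with different reward scales.
rng = random.Random(0)
num_samples = 1000
branches = [
    Branch(name, [scale * rng.random() for _ in range(num_samples)])
    for name, scale in [("a1", 0.2), ("a2", 0.4), ("a3", 1.0)]
]
chosen = select_branch(branches)
print(chosen.name, {b.name: b.used for b in branches})
```

Because a branch is discarded only when its upper bound falls below another branch's lower bound, the selected branch matches the one an exhaustive evaluation would pick. The running sum is what carries work over from one simplification level to the next in this sketch.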

In another work, we introduced a framework for quantifying, online, the effect of a simplification, alongside novel stochastic bounds on the return. These bounds take advantage of the information encoded in the joint distribution of the original and simplified returns. The framework is general: it can be applied with any bounds on the return to capture simplification outcomes.

Related Publications:

Journal Articles

- M. Barenboim and V. Indelman, “Online POMDP Planning with Anytime Deterministic Optimality Guarantees,” Artificial Intelligence, 2026.

- A. Zhitnikov and V. Indelman, “Anytime Probabilistically Constrained Provably Convergent Online Belief Space Planning,” IEEE Transactions on Robotics (T-RO), 2025.

- M. Novitsky, M. Barenboim, and V. Indelman, “Previous Knowledge Utilization In Online Anytime Belief Space Planning,” IEEE Robotics and Automation Letters (RA-L), 2025.

- A. Zhitnikov and V. Indelman, “Simplified Continuous High Dimensional Belief Space Planning with Adaptive Probabilistic Belief-dependent Constraints,” IEEE Transactions on Robotics (T-RO), 2024.

- A. Zhitnikov, O. Sztyglic, and V. Indelman, “No Compromise in Solution Quality: Speeding Up Belief-dependent Continuous POMDPs via Adaptive Multilevel Simplification,” International Journal of Robotics Research (IJRR), 2024.

- T. Yotam and V. Indelman, “Measurement Simplification in ρ-POMDP with Performance Guarantees,” IEEE Transactions on Robotics (T-RO), 2024.

- M. Barenboim, M. Shienman, and V. Indelman, “Monte Carlo Planning in Hybrid Belief POMDPs,” IEEE Robotics and Automation Letters (RA-L), no. 8, Aug. 2023.

- M. Barenboim, I. Lev-Yehudi, and V. Indelman, “Data Association Aware POMDP Planning with Hypothesis Pruning Performance Guarantees,” IEEE Robotics and Automation Letters (RA-L), no. 10, Oct. 2023.

- A. Zhitnikov and V. Indelman, “Simplified Risk Aware Decision Making with Belief Dependent Rewards in Partially Observable Domains,” Artificial Intelligence, Special Issue on “Risk-Aware Autonomous Systems: Theory and Practice”, Aug. 2022.

Conference Articles

- T. Shazman, I. Lev-Yehudi, R. Benchetrit, and V. Indelman, “Online Robust Planning under Model Uncertainty: A Sample-Based Approach,” in 40th Annual AAAI Conference on Artificial Intelligence (AAAI), Jan. 2026.

- D. Kong and V. Indelman, “Open-loop POMDP Simplification and Safe Skipping of Replanning with Formal Performance Guarantees,” in World Symposium on the Algorithmic Foundations of Robotics (WAFR), Jun. 2026.

- I. Lev-Yehudi, M. Novitsky, M. Barenboim, R. Benchetrit, and V. Indelman, “Action-Gradient Monte Carlo Tree Search for Non-Parametric Continuous (PO)MDPs,” in International Joint Conference on Artificial Intelligence (IJCAI), Aug. 2026.

- I. Lev-Yehudi, M. Barenboim, and V. Indelman, “Simplifying Complex Observation Models in Continuous POMDP Planning with Probabilistic Guarantees and Practice,” in 38th AAAI Conference on Artificial Intelligence (AAAI-24), Feb. 2024.

- D. Kong and V. Indelman, “Simplified Belief Space Planning with an Alternative Observation Space and Formal Performance Guarantees,” in International Symposium of Robotics Research (ISRR), Dec. 2024.

- A. Zhitnikov and V. Indelman, “Simplified Risk-aware Decision Making with Belief-dependent Rewards in Partially Observable Domains,” in International Joint Conference on Artificial Intelligence (IJCAI), journal track, Aug. 2023.

- M. Barenboim and V. Indelman, “Online POMDP Planning with Anytime Deterministic Guarantees,” in Conference on Neural Information Processing Systems (NeurIPS), Dec. 2023.

- M. Shienman and V. Indelman, “D2A-BSP: Distilled Data Association Belief Space Planning with Performance Guarantees Under Budget Constraints,” in IEEE International Conference on Robotics and Automation (ICRA), *Outstanding Paper Award Finalist*, May 2022.

- M. Barenboim and V. Indelman, “Adaptive Information Belief Space Planning,” in the 31st International Joint Conference on Artificial Intelligence and the 25th European Conference on Artificial Intelligence (IJCAI-ECAI), Jul. 2022.

- O. Sztyglic and V. Indelman, “Speeding up POMDP Planning via Simplification,” in IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Oct. 2022.

- M. Shienman and V. Indelman, “Nonmyopic Distilled Data Association Belief Space Planning Under Budget Constraints,” in International Symposium on Robotics Research (ISRR), Sep. 2022.

Technical Reports

- Y. Pariente and V. Indelman, “Online Risk-Averse Planning in POMDPs Using Iterated CVaR Value Function,” 2026.

- Y. Pariente and V. Indelman, “POMDPPlanners: Open-Source Package for POMDP Planning,” 2026.

- Y. Pariente and V. Indelman, “Accelerated Online Risk-Averse Policy Evaluation in POMDPs with Theoretical Guarantees and Novel CVaR Bounds,” 2026.

- D. Kong and V. Indelman, “Finite-Time Analysis of MCTS in Continuous POMDP Planning,” 2026.

- T. Lemberg and V. Indelman, “Online Hybrid-Belief POMDP with Coupled Semantic-Geometric Models and Semantic Safety Awareness,” 2025.

- R. Benchetrit, I. Lev-Yehudi, A. Zhitnikov, and V. Indelman, “Anytime Incremental ρPOMDP Planning in Continuous Spaces,” 2025.

- T. Kundu, M. Rafaeli, A. Gulyaev, and V. Indelman, “Action-Consistent Decentralized Belief Space Planning with Inconsistent Beliefs and Limited Data Sharing: Framework and Simplification Algorithms with Formal Guarantees,” 2025.

- Y. Pariente and V. Indelman, “Bounding Conditional Value-at-Risk via Auxiliary Distributions with Bounded Discrepancies,” 2025.

- M. Rafaeli and V. Indelman, “Towards Optimal Performance and Action Consistency Guarantees in Dec-POMDPs with Inconsistent Beliefs and Limited Communication,” 2025.

- Y. Pariente and V. Indelman, “Simplification of Risk Averse POMDPs with Performance Guarantees,” 2024.

- G. Rotman and V. Indelman, “involve-MI: Informative Planning with High-Dimensional Non-Parametric Beliefs,” Sep. 2022.

- A. Zhitnikov and V. Indelman, “Probabilistic Loss and its Online Characterization for Simplified Decision Making Under Uncertainty,” 2021.

- O. Sztyglic, A. Zhitnikov, and V. Indelman, “Simplified Belief-Dependent Reward MCTS Planning with Guaranteed Tree Consistency,” May 2021.

Theses

- M. Novitsky, “Previous Knowledge Utilization In Online and Non-Parametric Belief Space Planning,” Master's thesis, Technion - Israel Institute of Technology, 2025.

- R. Benchetrit, “Anytime Incremental ρPOMDP Planning in Continuous Spaces,” Master's thesis, Technion - Israel Institute of Technology, 2025.

- A. Zhitnikov, “Simplification for Efficient Decision Making Under Uncertainty with General Distributions,” PhD thesis, Technion - Israel Institute of Technology, 2024.

- M. Barenboim, “Simplified POMDP Algorithms with Performance Guarantees,” PhD thesis, Technion - Israel Institute of Technology, 2024.

- O. Levy-Or, “Novel Class of Expected Value Bounds and Applications in Belief Space Planning,” Master's thesis, Technion - Israel Institute of Technology, 2024.

- M. Shienman, “Structure Aware Probabilistic Inference and Belief Space Planning with Performance Guarantees,” PhD thesis, Technion - Israel Institute of Technology, 2024.

- T. Yotam, “Measurement Simplification in ρ-POMDP with Performance Guarantees,” Master's thesis, Technion - Israel Institute of Technology, 2023.

- G. Rotman, “Efficient Informative Planning with High-dimensional Non-Gaussian Beliefs by Exploiting Structure,” Master's thesis, Technion - Israel Institute of Technology, 2022.

- O. Sztyglic, “Online Partially Observable Markov Decision Process Planning via Simplification,” Master's thesis, Technion - Israel Institute of Technology, 2021.